Hardware visionary Mary Lou Jepsen’s next act is to read your mind—no implants required.

In 1996, when Mary Lou Jepsen was in the home stretch of a Ph.D. in engineering at Brown University, she became so ill that eventually she concluded her only option was to drop out of school and move back home to die. Her sickness was a mystery. She had all the signs of AIDS, but she was HIV negative. Her body was covered in sores. She was getting worse every day.

Right about the time that Jepsen was running out of hope, she got an MRI scan. There, in that magnet-made map of her body’s water and fat, the problem was obvious: She had a brain tumor.

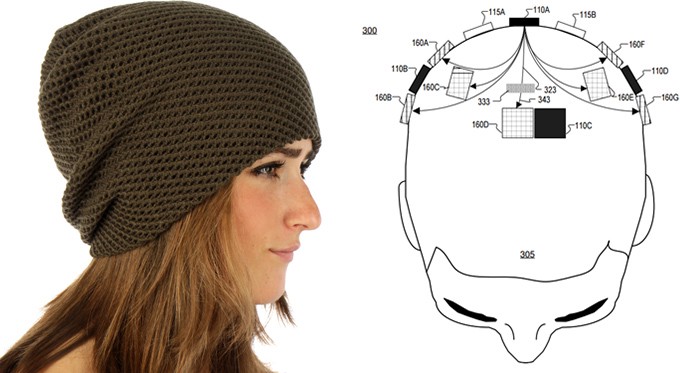

Jepsen underwent surgery to remove the tumor, finished her Ph.D., and went on to become a hardware visionary at the One Laptop Per Child project, at a low-power-display company called Pixel Qi, and then at Google and Facebook. Last year she left Facebook to start Openwater, a company intent on developing a “wearable MRI.”

One purpose for such a device would be to dramatically lower the cost and widen the availability of the diagnostic technology that saved her life. But that’s not all. Back when she was a master’s student at the MIT Media Lab, Jepsen worked on a team that built the first holographic video system. A hologram isn’t so much an image as it is a detailed map of light waves. If you shine a laser at something, you can record how the light scatters when it hits the object, and then use that information to reconstruct the object in three dimensions. Jepsen’s team figured out how to compute those light patterns at the speed of video.

Decades later, Jepsen is betting this holographic technology can be used in her wearable MRI to not just map but also interact with the brain. As she said in a TEDx talk in San Francisco last October: “It can allow us to connect our brains directly to the computer, so we can download and upload thoughts and enhance our thinking in profound ways. The stuff of science fiction.”

Jepsen is one of many technologists who are heralding brain-computer interfaces that would make communication happen at the speed of thought. In March, Elon Musk announced a new venture, Neuralink, aimed at developing a brain implant within the next decade that would allow humans to communicate telepathically with one another and augment their own intelligence with the help of AI. Last month, Facebook followed suit with plans to develop a brain-computer interface of its own, this one a gadget that sits atop the skull and allows users to post to the social network using thought alone. There’s also Kernel, backed by a $100 million investment from payments entrepreneur Bryan Johnson to build a brain-computer interface capable of upgrading human intelligence.

It’s On! The Race to Wire the Brain

Academic researchers have labored for decades to connect brains and computers, with only halting progress. But now Silicon Valley wants to give this work a jolt by applying the hacker ethos to the body’s wetware. And unlike academic research, which has largely focused on designing devices to restore damaged hearing, sight, and movement to people with disabilities, the tech industry hopes to appeal to the average consumer — or at least the average tech geek — by streamlining the communications links from our brains to machines and to other people.

“I see this unfolding in less than a decade,” Jepsen told me from the road while promoting her new endeavor. “A lot of people think it’s much further out.”

In 1973, a UCLA computer scientist named Jacques Vidal coined a term that at the time seemed pretty damn science-fiction: “brain-computer interfaces.” Soon, he hypothesized, human brains would connect directly with machines. This, in turn, would birth a bold new era, one where humans could communicate and move things (even spaceships!) using their minds.

Thanks to advances in technology, he wrote at the time, “one may suggest such a feat is potentially around the corner.” Indeed, within a few years, Vidal’s lab had achieved the first proof that it was possible, in a landmark trial that used EEG signals from the human brain to move a cursor through a two-dimensional maze.

Since then, however, few brain-computer interfaces have made it past the point of laboratory experimentation. Systems that work outside the brain don’t actually accomplish that much. And ones that go inside the brain are still expensive, cumbersome tangles of wires that work unreliably — that is, when they even work at all. It seems unlikely that you’ll plug into the Matrix to download a black belt in karate any time soon.

Let’s start with an idea that’s more invasive than what Jepsen is proposing — implants in the brain, like Musk’s “neural lace” concept. Putting electrodes in the cortex has in fact progressed somewhat. Remember last fall, when Barack Obama fist-bumped a mind-controlled prosthetic arm?

Even so, we still know comparatively little about how the brain’s 100 billion neurons operate and interact. “Basic science is the biggest thing missing from these conversations,” says Andrew Schwartz, a professor of neurobiology at the University of Pittsburgh School of Medicine.

For decades, Schwartz has been working on developing an implant to allow paralyzed people to not only move a robotic limb naturally via brain signal, but also to “feel” the sensation of movement. “There are more than 30 years of underlying research into how movement is encoded in the brain and then translated into shoulder, elbow, wrist, and finger movement,” he says. “After all those years, so far we have gotten our implant to work in two patients. Two.” After decades of research, in other words, the technology is still extremely nascent.

The underlying technology dates back to the 1920s, when researchers discovered that the brain conveys information via electrical signals that could be recorded using electrodes. Brain-machine interfaces exploit the way that information travels. When you move your arm, neurons in your motor cortex fire away. For a paralyzed woman to move a prosthetic arm, electrodes implanted in the motor cortex must intercept those signals, process them, and then translate them into movement with the prosthetic.

But first you have to know what those signals mean, and how they work. Learning that requires a lot of trial and error, even for physical movements. Mapping the neuronal activity of individual thoughts would be much tougher. “I’m interested in restoring dexterous hand function. But I have no idea how that even works,” Schwartz says. “We want to use BCIs to improve intellect, but we don’t know what intellect is.”

The brain implant used most often in such research is a silicon electrode array that is 20 years old. Such an implant simply isn’t capable of decoding enough neural activity at once to, say, communicate telepathically. Musk’s idea is to leapfrog that technology by instead injecting the brain with thousands of tiny silicon motes that transmit information using acoustic vibrations. That prospect is little more than theoretical.

“We don’t have the technology to measure thoughts at the speed of communication. We don’t even know what kind of technology we need,” says Gerwin Schalk, a pioneer of the field at Albany Medical College. “You’re not going to pin electrodes in every part of the brain.”

Not to mention that when implants do work, they typically lose their effectiveness after a few years. Brain chemicals and tissue scarring begin to interfere with an electrode’s signal. Sometimes they stop working altogether. All this makes it hard to imagine a near future when we green-light voluntary brain surgery. The complexity of neuroscience has already caused Kernel to shift away from one of its lofty ambitions, creating a “memory implant.”

From Jepsen’s perspective, consumer brain-computer interfaces are never going to happen if it means cracking open someone’s skull and putting something inside it. She is particularly critical of Musk’s approach. “That’s based on a science-fiction book,” she says. “There certainly are lots of implants working out there. But for this to really happen, it has to be noninvasive and it has to be removable.”

There has been intriguing progress in brain-computer interfaces that don’t require opening up the skull to implant electrodes. Researchers have developed systems that let people tweet by thought alone or compose music using brainwaves.

Such systems, though, are more limited than they might seem. Banging out the message “USING EEG TO SEND TWEET” required a person to first don a Frankenstein-esque electrode cap, then select each letter of the tweet individually by moving a cursor around on a screen with their mind. To go from typing out a 140-character tweet with a thought to, say, an email or a Facebook post would require deciphering added layers of complexity, such as your intentions of what you planned to write in each different field of a post.

Facebook’s plan, disclosed in April at its F8 event, is a riff on the experiment in which people tweeted with their thoughts. Facebook wants to create headgear capable of beaming photons through the skull and reading what bounces back, decoding neural activity based on how cells reflect light.

Facebook says it hopes to let people turn thoughts into text at a speed of 100 words per minute. The current brain-typing record is 12 words a minute. And that was achieved by implanting electrodes inside the brains of volunteers and having them painstakingly peck out each letter with a mind-controlled cursor, rather than translating thoughts directly into words. There are lots of similar projects that also rely on actually implanting electrodes within the brain. That’s because, as several scientists told me, this is probably going to be necessary for getting detailed, accurate neural information.

That gets to the reason Jepsen left Facebook to start Openwater: She doesn’t think Facebook’s mind-reading technology will go far enough. As she understands it, Facebook’s plan requires a very fast, expensive camera that does not have the ability to scan deep inside the body. And even if Facebook could lower the cost, “with that approach all they can ever do with it is silent speech,” she says. “My technology is better. My system uses the speed of components in cameras and cell phones to get four inches of depth through the brain. It’s a hundred times the depth with a hundred times the resolution. Their technology is only skin deep.”

While Jepsen calls her technology a “wearable MRI,” that’s not really what it is; after all, she is not proposing to use magnets to make images of the brain. Instead, her device would use near-infrared light to scan changes in blood-oxygen levels in the brain, then use the principles of holography to render that data in images as detailed as those an MRI machine produces.

Already, near-infrared light has been used in combination with super-fast cameras to capture information in the brain. But the fact that the body’s tissues naturally scatter light makes it difficult to record information much deeper in the body with very high resolution. Think about what happens when you shine a laser pointer on your finger. Instead of the laser beam just coming out the other side, the light scatters, making your finger glow red. This is where Jepsen’s holograms would come in. If you could record that scattered light and send it back through the body, you could theoretically make a high-resolution, three-dimensional map of what’s going on inside.

Jepsen plans to start using her improved version of MRI technology for medical diagnoses, but the ultimate goal is, in classic Silicon Valley parlance, “a moonshot to telepathy.”

For evidence, she cites research performed at Berkeley a few years ago, when researchers put people into MRI machines and showed them movie clips. The researchers were able to convert the MRI readouts of the test subjects’ brains into fuzzy, imprecise renditions of the movie clips. “If I put you in an MRI machine, I can tell you the images in your brain right now, or whether you’re in love,” Jepsen says. With the ability to continuously scan the brain in even greater detail, she argues, we’ll be able to decipher far more.

It gets even wilder. Depending on how you focus beams of light into the brain, you may be able to not just “read” but also “write,” in order to, say, irradiate a tumor or even upload a thought. The possibilities, she readily acknowledges, are both empowering and frightening. As the Openwater website notes: “At the extreme we believe we can change neuron states, ideas, and memories with this method.”

Jepsen proposes that her innovation will be as much in the manufacturing process as in the science. She plans to make the holographic LCDs that power her technology in the same Asian factories that make our phones and computers so cheap.

Then again, Openwater has no published papers yet. Jepsen says she’s still working on the patents. She also hasn’t disclosed how much funding she has raised.

When I told Thomas Witzel, a biomedical engineering expert at Harvard, that Openwater plans to have a product ready in just a few years, he laughed. “We have never seen any kind of technology with the spatial resolution or penetration depth she’s talking about,” he says. “It’s plausible, but I’ve never seen it done.” He adds: “Leveraging the tech industry’s manufacturing process could lead to a lot of progress in this space, but there’s limitations in the physics that still require more work.”

However, David Boas, who researches optical imaging of the brain at Harvard, told me that talking with Jepsen has converted him from a skeptic.

“When I first started hearing from colleagues about what Mary Lou Jepsen was proposing, I, too, viewed the claims as grandiose,” Boas says. “I have now come to the realization that aspects of her vision are possible.” With significant investment, Boas says, LCD technology could be modified to dramatically improve our ability to image through human tissue. “Theoretically, this is possible, although I am still trying to get my head around how deeply we could in fact get the light to focus inside the body.”

Even Boas, though, says he’s unsure whether such an innovation might one day turn us all into mind-reading autodidacts. From where we sit now, it’s hard not to dismiss that part of the idea as tech industry braggadocio.

Jepsen, though, exudes only confidence. She says she plans to have a prototype in the next year and to be producing millions of devices in less than five years.

“The physics is quite solid,” she told me. “It’s not stark raving at all. This is very doable.”

This story was updated after publication to clarify Jepsen’s characterization of Facebook’s technology.